Summary

- Revenue Surge & Profit Growth: Alphabet’s revenue crossed $400 billion and net income rose nearly 30% to $34.5 billion, showing that its core engines (Ads and Cloud) remain highly profitable.

- The Spending Shock: Google’s $185 billion AI capex forecast for 2026 is more than five times net income, a manifesto for compute sovereignty rather than a budget line.

- Competitive Lens: Microsoft, Google’s closest rival, must decide whether to match this spending shock or position itself as the disciplined alternative. Either way, the two are defining the AI infrastructure frontier.

- Investor Takeaway: Margin expansion is dead as a primary metric. Google is trading short‑term efficiency for long‑term sovereignty, aiming to become the Central Bank of Intelligence.

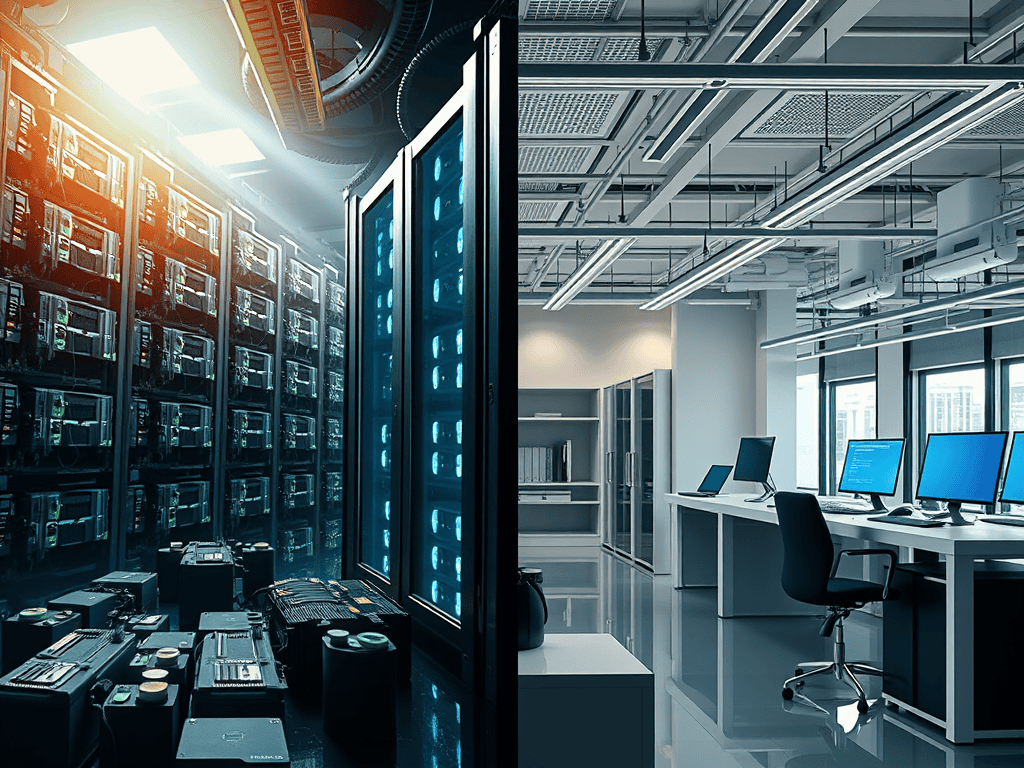

Alphabet’s annual revenue has officially crossed the $400 billion mark. Net income rose nearly 30% to $34.5 billion, proving that Google’s core engines — Ads and Cloud — are not just surviving; they are funding the war for AI sovereignty. The advertising machine and cloud contracts are underwriting the $185B build‑out of data centers and TPU silicon — the infrastructure war that decides who owns the compute layer of the global economy.

Analytical Takeaways

- Capex dwarfs net income, at more than five times its size, raising questions about margin sustainability.

- Profits are rising in tandem with revenue, showing efficiency in Google’s core businesses.

- Investor tension is visible: shares dipped ~6% on the announcement, reflecting unease about infrastructure war spending without a clear ROI horizon.

- Strategic bet: Google is deliberately trading short‑term margin expansion for long‑term Compute Sovereignty.

- Competitive lens: Microsoft, Google’s closest rival, must now decide whether to match the spending shock or position itself as the disciplined alternative. Either way, the duopoly is defining the frontier.

The Spending Shock

Google just reset the scoreboard. A $185 billion capex forecast for 2026 isn’t a budget; it’s a manifesto. This scale of investment — data centers, custom TPU silicon, and generative AI platforms — is the Data Cathedral in physical form, a build‑out rivaling national power grids.

The math is stark: capex is now more than 5x net income. Google is outspending Microsoft and Meta in absolute infrastructure terms, positioning itself as the pace‑setter in the AI sovereignty race.
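To make the ratio concrete, here is a minimal sketch that divides the two figures quoted in this article ($185 billion in forecast 2026 capex and $34.5 billion in net income). The variable names are illustrative only, and the inputs are exactly the article's numbers, not independently sourced data.

```python
# Figures as stated in this article, in billions of US dollars.
capex_2026_forecast = 185.0
net_income = 34.5

# Ratio of the 2026 capex forecast to the reported net income figure.
ratio = capex_2026_forecast / net_income

print(f"Capex is {ratio:.2f}x net income")  # prints "Capex is 5.36x net income"
```

At roughly 5.36x, the forecast sits just above five times the net income figure.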

Investor Takeaway

We are witnessing the death of “margin expansion” as a primary metric. Alphabet is deliberately sacrificing short‑term efficiency to secure Compute Sovereignty.

The risk is immediate: Wall Street recoils at infrastructure wars without a clear ROI horizon, preferring margin discipline to sovereignty bets. Yet the truth is unavoidable: in 2026, the company that owns the most compute wins the right to tax the global economy. Google isn’t spending to stay relevant; it is spending to become the Central Bank of Intelligence.

Subscribe to Truth Cartographer — because here we map the borders of power, the engines of capital, and the infrastructures of the future.

Further reading: